Introduction

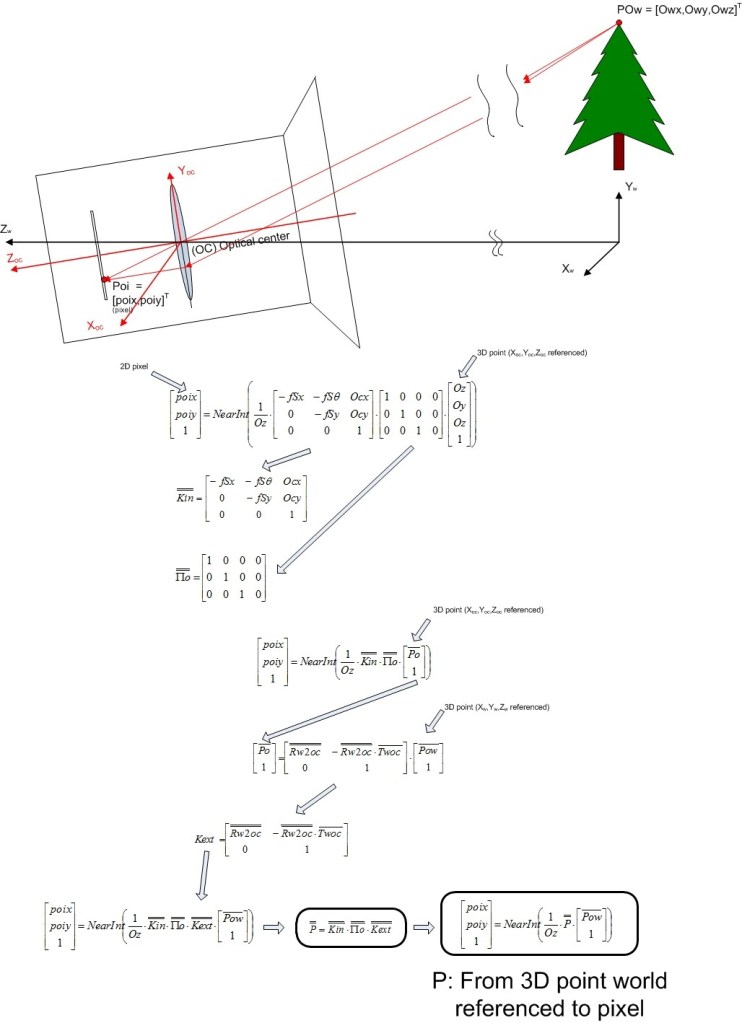

The projection matrix P converts a world-referenced 3D point into a pixel (poi).

The P matrix is built from the following parameters:

- Kin (intrinsic parameters)

  - f (focal length)

  - CCD size (width, height)

  - CCD pixels (horizontal, vertical)

  - Skew angle

- Kext (extrinsic parameters)

  - Camera position vector Twoc (world referenced)

  - Camera rotation Rwoc (yaw, pitch, roll angles with respect to the world axes)
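As a rough illustration of how the Kin parameters listed above could combine, here is a minimal Python sketch. The function name `intrinsic_matrix`, the principal point placed at the image center, and the skew formulation (a common textbook form) are assumptions, not something stated in this article. The numbers in the usage line are illustrative only.

```python
import math

def intrinsic_matrix(f_mm, ccd_w_mm, ccd_h_mm, px_w, px_h, skew_deg=90.0):
    """Build a 3x3 intrinsic matrix (hypothetical helper).

    Assumes the principal point sits at the image center and uses a
    common textbook skew model; neither is confirmed by the article.
    """
    fx = f_mm * px_w / ccd_w_mm   # focal length in horizontal pixels
    fy = f_mm * px_h / ccd_h_mm   # focal length in vertical pixels
    theta = math.radians(skew_deg)
    # The skew term is (numerically) zero when the skew angle is 90 degrees.
    skew = -fx * math.cos(theta) / math.sin(theta)
    return [
        [fx, skew, px_w / 2.0],
        [0.0, fy / math.sin(theta), px_h / 2.0],
        [0.0, 0.0, 1.0],
    ]

# Illustrative values only: 50 mm lens, 36x24 mm sensor, 3600x2400 pixels.
K = intrinsic_matrix(50.0, 36.0, 24.0, 3600, 2400)
```

Dividing the pixel count by the sensor size gives pixels per millimetre, so `fx` and `fy` are simply the focal length expressed in pixel units along each axis.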

Demonstration

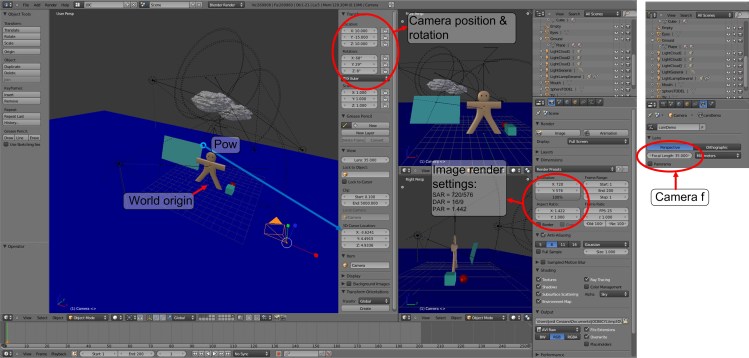

Figure 2 shows a 3D scene generated with Blender. We know the world point Pow = [-3.6241, 4.4915, 4.9336] (the upper right corner of the blue screen) and the following camera parameters:

- f: 35 mm (taken from Blender)

- CCD size horizontal: 32 mm (taken from Blender, where it is hard coded)

- CCD size vertical: 32*(9/16) = 18 mm (calculated from the horizontal CCD size assuming DAR = 16/9)

- CCD pixels: 720×576 (render image pixels)

- Camera position: [10, -15, 10] (world referenced) (taken from Blender)

- Skew angle: 90º (assuming a well-aligned CCD)

- Camera rotation (ordered; in Blender this rotation order is named ZYX Euler):

  - Yaw (Z): +8º

  - Pitch (Y): +29º

  - Roll (X): +68º
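To make the rotation order concrete, the following Python sketch composes the three elementary rotations. It assumes that Blender's ZYX Euler mode corresponds to the product Rx(roll)·Ry(pitch)·Rz(yaw) for the camera-to-world rotation, and it relies on the fact that a Blender camera looks along its local -Z axis. The helper names are hypothetical.

```python
import math

def rot_x(deg):
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]

def rot_y(deg):
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]

def rot_z(deg):
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def matmul(a, b):
    """Plain 3x3 matrix product."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def camera_to_world(yaw_deg, pitch_deg, roll_deg):
    # Assumed composition for Blender's "ZYX Euler": Z is applied first, X last.
    return matmul(rot_x(roll_deg), matmul(rot_y(pitch_deg), rot_z(yaw_deg)))

R = camera_to_world(8.0, 29.0, 68.0)

# The camera looks along its local -Z axis, i.e. minus the third column of R.
view_dir = [-R[0][2], -R[1][2], -R[2][2]]
```

With these angles `view_dir` points roughly towards negative X and positive Y, slightly downwards, which is consistent with a camera at [10, -15, 10] looking at a point near [-3.6, 4.5, 4.9].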

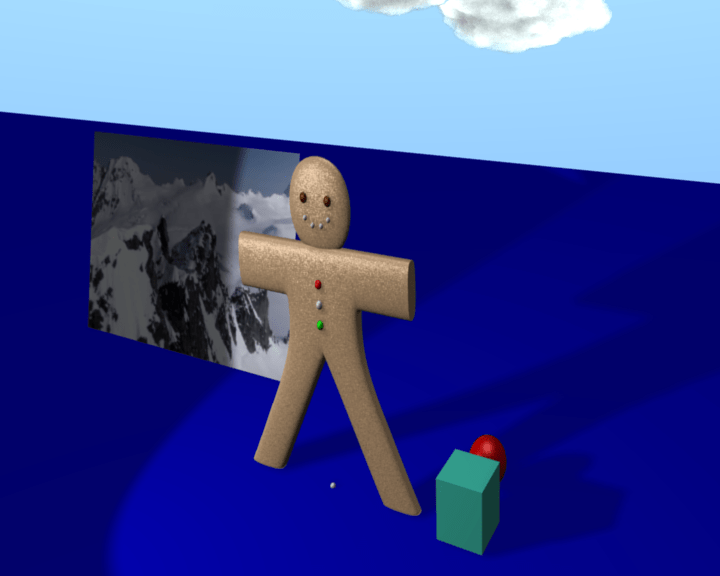

Figure 3 shows the rendered image. If you download it, you can measure that the upper right corner of the screen (Pow) falls on pixel [299, 154].

However, the image in figure 3 is NOT the image captured on Blender's virtual CCD: the image captured by the virtual CCD (front view) is vertically flipped (see figure 4).

The intrinsic matrix was defined using the axes of the CCD front view, in which the image appears vertically inverted with respect to the stored image (figure 3).

If we flip the rendered image vertically, the pixel corresponding to Pow becomes [299, 422] (576 - 154 = 422).
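The vertical flip can be written as a one-line helper. This is a minimal sketch: the function name `flip_vertical` is hypothetical, and it uses v' = height - v because that is the relation between the two pixel values quoted in the text.

```python
def flip_vertical(pixel, image_height):
    """Mirror a (u, v) pixel top-to-bottom using v' = height - v."""
    u, v = pixel
    return (u, image_height - v)

stored_pixel = (299, 154)                    # measured on the stored render
ccd_pixel = flip_vertical(stored_pixel, 576)  # same point in the CCD front view
```

If rows are counted strictly from 0 to height - 1, the flip would be `image_height - 1 - v` instead, which shifts the result by one pixel.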

Finally, we only have to check that the calculated pixel matches the rendered pixel (see figure 5).

To simplify these steps you can use the following MATLAB function, which calculates the pixel position (and other camera parameters):

With this calculation we can find the pixel corresponding to any point of the real world, provided we have the following parameters:

- OC (Origin of coordinates: World origin)

- Pow: 3D Point location (world referenced)

- Camera f

- CCD size (horizontal, vertical) or camera FOV

- CCD pixels (horizontal, vertical)

- Twoc: Camera location (world referenced)

- CCD skew angle (rarely used; default = 90º)

- Camera rotation in X,Y,Z (ordered)

If we have the camera projection matrix (P), it is enough by itself to calculate the pixel corresponding to any Pow, because P embeds the following parameters: OC, f, CCD size, CCD pixels, Twoc, CCD skew angle, and camera rotations.
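As an end-to-end illustration, the Python sketch below builds a 3x4 matrix P from the demonstration values and applies it to Pow. It is not the author's MATLAB function. It assumes a principal point at the image center, a zero skew term (90º skew angle), a camera-to-world rotation Rx(roll)·Ry(pitch)·Rz(yaw), and a camera that looks along its local -Z axis with +Y up. All helper names are hypothetical. Under these assumptions the result should fall within about a pixel of the [299, 422] measured in the CCD front view.

```python
import math

def matmul(a, b):
    """Generic matrix product for nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def rot(axis, deg):
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    if axis == "x":
        return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]
    if axis == "y":
        return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

# Intrinsics: focal length in pixels, principal point assumed at image center.
fx = 35.0 * 720 / 32.0
fy = 35.0 * 576 / 18.0
K = [[fx, 0.0, 360.0], [0.0, fy, 288.0], [0.0, 0.0, 1.0]]

# Extrinsics: assumed camera-to-world rotation and camera position Twoc.
R = matmul(rot("x", 68.0), matmul(rot("y", 29.0), rot("z", 8.0)))
C = [10.0, -15.0, 10.0]

# World-to-camera transform: rotation R^T and translation -R^T * C.
Rt = [[R[j][i] for j in range(3)] for i in range(3)]
t = [-sum(Rt[i][k] * C[k] for k in range(3)) for i in range(3)]

# Flip Z so that depth in front of the camera is positive. Y is left
# pointing up, matching the vertically flipped CCD front view.
flip = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, -1.0]]
Rt_t = [Rt[i] + [t[i]] for i in range(3)]   # 3x4 block [R^T | t]
P = matmul(K, matmul(flip, Rt_t))           # 3x4 projection matrix

def project(P, point_world):
    """Apply P to a 3D point and divide by the homogeneous coordinate."""
    h = list(point_world) + [1.0]
    x, y, w = (sum(P[i][k] * h[k] for k in range(4)) for i in range(3))
    return x / w, y / w

u, v = project(P, [-3.6241, 4.4915, 4.9336])
print(round(u, 1), round(v, 1))  # expected to land near [299, 422]
```

Once P is known, `project` needs nothing else: every camera parameter is already folded into the twelve entries of the matrix.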